
Distributed Resource Scheduler (DRS) has been around since 2006. Its main use case is spreading the VM workload over the ESXi servers in a vSphere cluster. Over the past few years several improvements have been added to DRS, for example Predictive DRS, in which vRealize Operations (vROps) helps DRS make placement decisions. With vSphere 7, the look and feel of DRS has been completely renewed, and there are major changes under the hood as well.

So what has changed?

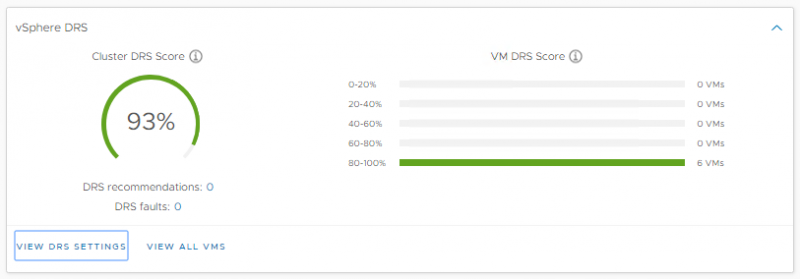

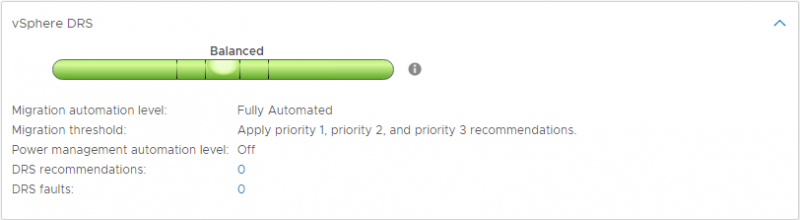

To start with, the Level shown on the summary page has been replaced by a Score (shown in the picture above).

Because DRS was designed to spread the load of VMs over the cluster, it worked in a cluster-centric manner. In vSphere 7, DRS has been rebuilt to work in a VM-centric manner by calculating a score for each VM. This score represents how ‘happy’ the VM is and is based on metrics such as CPU %ready time, CPU cache behavior and memory swapping. The score is calculated every minute, whereas the old DRS level was calculated every 5 minutes. In addition to the score, DRS calculates the cost of moving the VM to another ESXi server. By scoring each running VM, DRS should make better placement decisions, balancing resource usage against the happiness of each individual VM.

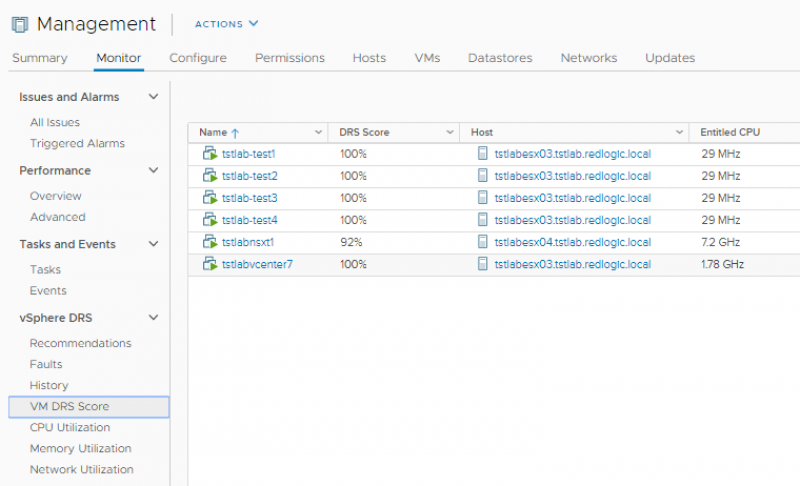

The DRS score for each VM can be viewed under the Monitor tab of a vSphere cluster.

There isn’t much going on in our vSphere 7 Lab, so all we currently see are high scores.

Assignable Hardware

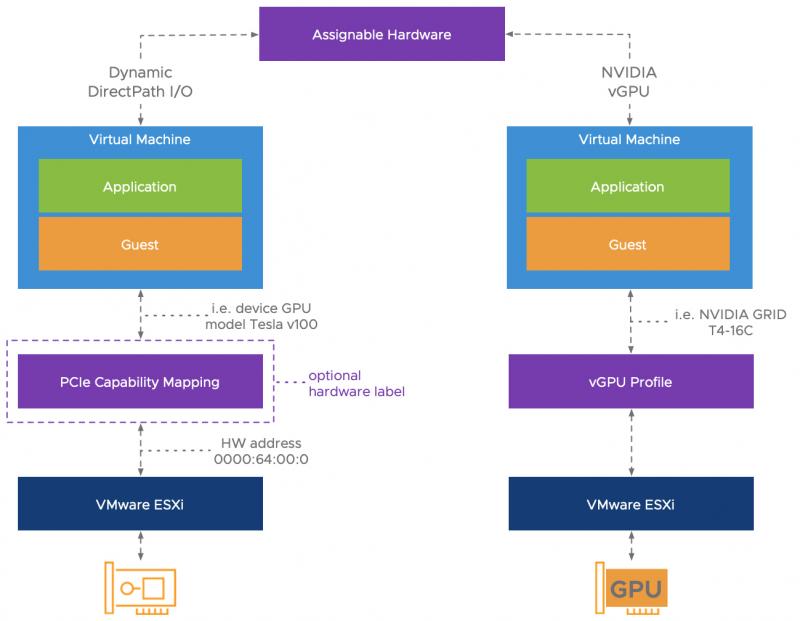

Another cool feature in vSphere 7 DRS is Assignable Hardware. When a VM is configured to use a physical device, for example a GPU, through the new Dynamic DirectPath I/O or through NVIDIA vGPU profiles, DRS in vSphere 7 now handles the initial placement so that the VM powers on on an ESXi server that has the configured hardware. vMotion is currently not supported for VMs that are connected to a PCIe device.

Unfortunately, I don’t have the hardware to demo this feature, but there are already some resources available, such as a video and a blog post by Niels Hagoort.

Scalable Shares

The last important update I want to point out is Scalable Shares. Although not enabled by default, Scalable Shares allows dynamic, relative entitlements within resource pools. If you have used resource pools before, you might know that assigning a VM to a resource pool with high shares configured isn’t a guarantee that the VM will receive more CPU cycles than a VM in a resource pool with low shares configured. Scalable Shares solves this, though remember that share-based mechanisms like this only take effect when resources are contended.

In the RedLogic lab I have created a resource pool with a CPU limit of 8 GHz. Within that resource pool I have created two child resource pools: Production and Test. The Production resource pool has high shares (8000) configured and the Test resource pool has normal shares (4000). In total there are 12,000 shares to divide, meaning 5.333 GHz for Production (8000/12,000 × 8 GHz) and 2.667 GHz for Test (4000/12,000 × 8 GHz).
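The proportional split in this example can be reproduced with a few lines of Python. This is a minimal sketch of the arithmetic only, assuming both child pools are under full contention and have no reservations or limits of their own; the function name `divide_by_shares` is hypothetical and not part of any vSphere API.

```python
def divide_by_shares(capacity_ghz: float, shares: dict[str, int]) -> dict[str, float]:
    # Each sibling gets capacity * (own shares / total shares of all siblings).
    # Assumption: every sibling is contending, i.e. wants more than it can get.
    total = sum(shares.values())
    return {name: capacity_ghz * s / total for name, s in shares.items()}


# 8 GHz parent pool with two child pools: Production (High) and Test (Normal)
pools = divide_by_shares(8.0, {"Production": 8000, "Test": 4000})
for name, ghz in pools.items():
    print(f"{name}: {ghz:.3f} GHz")
# Production: 5.333 GHz
# Test: 2.667 GHz
```

Only the ratio between sibling share values matters here: the same split would result from 2:1 shares of any magnitude.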

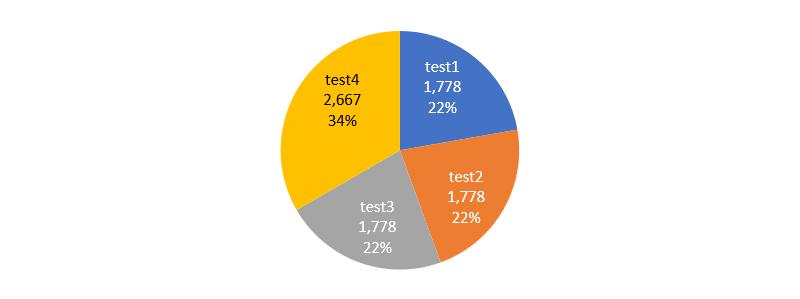

When there are more VMs in the Production resource pool than in the Test resource pool, each VM in the Test resource pool can receive more CPU cycles than a VM in the Production resource pool, because a pool's entitlement is divided only among the VMs inside it. For this example I have placed three VMs with high shares in the Production resource pool and one VM with normal shares in the Test resource pool. During resource contention the CPU cycles are divided as follows:

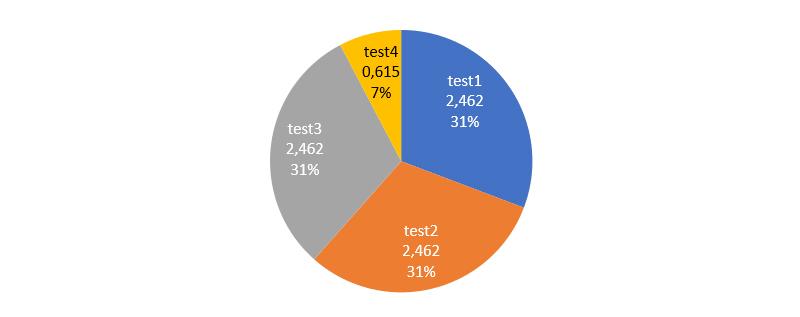
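To see why the single Test VM comes out ahead, the two levels of the traditional (non-scalable) share hierarchy can be modelled as below. This is a simplified sketch, not how DRS itself is implemented: it assumes single-vCPU VMs that all demand more CPU than they can get, no reservations or limits, and the usual per-VM CPU share values of High = 2000 and Normal = 1000. The VM names and the `divide_by_shares` helper are hypothetical.

```python
def divide_by_shares(capacity_ghz: float, shares: dict[str, int]) -> dict[str, float]:
    # Split capacity among siblings in proportion to their shares.
    total = sum(shares.values())
    return {name: capacity_ghz * s / total for name, s in shares.items()}


# Level 1: the two child pools under the 8 GHz parent pool
pools = divide_by_shares(8.0, {"Production": 8000, "Test": 4000})

# Level 2: each pool's entitlement is divided only among its own VMs.
# Assumption: single-vCPU VMs, High = 2000 and Normal = 1000 shares per VM.
vms = {
    "Production": {"prod-vm1": 2000, "prod-vm2": 2000, "prod-vm3": 2000},
    "Test": {"test-vm1": 1000},
}
per_vm: dict[str, float] = {}
for pool, members in vms.items():
    per_vm.update(divide_by_shares(pools[pool], members))

for vm, ghz in per_vm.items():
    print(f"{vm}: {ghz:.3f} GHz")
# prod-vm1: 1.778 GHz
# prod-vm2: 1.778 GHz
# prod-vm3: 1.778 GHz
# test-vm1: 2.667 GHz
```

In this model the VM shares only compete with siblings in the same pool, so the High setting on the Production VMs cannot offset the fact that three of them share one pool's entitlement.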

Scalable Shares can solve this imbalance by calculating the shares over the entire root resource pool. With Scalable Shares enabled, the CPU cycles during resource contention are divided as follows:

As you can see, the VMs with high shares in the Production resource pool (which itself has high shares) now receive the most CPU cycles, as expected. By the look of it, a very promising feature.

If you would like to know how this works and how the shares are calculated, check out my next blog post, in which I show and explain how DRS calculates the shares with Scalable Shares enabled.

Questions, Remarks & Comments

If you have any questions or need more clarification, we are more than happy to dig deeper. Any comments are also appreciated. You can either post them online or send them directly to the author; it’s your choice.
